Previously I've talked about

Automatic Differentiation (AD). My own formulation of the technique is more algebraic than the description that is usually given, and recently it's begun to dawn on me that all I've done is rediscover Synthetic Differential Geometry (SDG). I've looked at a variety of SDG papers but none of them seem to make the connection with AD. This is a pity because SDG allows you to do an amazing thing - write computer programs that easily manipulate objects such as vector fields and differential forms on manifolds without doing symbolic algebra. My goal here is to illustrate how the definition of vector field in Anders Kock's

book gives a nice functional definition of a vector field and how that definition leads naturally to the Lie bracket. Normally it takes a significant amount of mathematical machinery to define these things but in the framework of SDG it becomes very simple. (Unfortunately this is going to be very sketchy as there's a lot I could say and I only have a small amount of time while I sit at home waiting for a new TV to be delivered...)

Kock's work is based on the idea of infinitesimal numbers whose square is zero but which aren't themselves zero. Clearly we aren't talking about real numbers here, but instead an extension to the real numbers. Here's a toy implementation:

> data Dual a = D a a deriving (Show,Eq)

> instance Num a => Num (Dual a) where

> fromInteger i = D (fromInteger i) 0

> (D a a')+(D b b') = D (a+b) (a'+b')

> (D a a')-(D b b') = D (a-b) (a'-b')

> (D a a')*(D b b') = D (a*b) (a*b'+a'*b)

> e = D 0 1

They're called

dual numbers because this particular algebra was apparently first described by Clifford and that's what he called them. The important point to note is that

e^2==0. We can use the fact that

f(x+e)=f(x)+ef'(x)

to compute derivatives of functions. For example if we define

> f x = x^3+2*x^2-3*x+1

and evaluate

f (1+e) we get

D 1 4. We can directly read off f(1)=1 and f'(1)=4.

But, as Kock points out, the dual numbers don't provide 'enough' infinitesimals, we just get one, e, from which all of the others are built. So we can replace Dual with a new class:

> infixl 5 .-

> infixl 5 .+

> infixl 6 .*

> infixl 6 *.

> data R a = R a [a] deriving Show

> zipWith' f l r [] [] = []

> zipWith' f _ r a [] = map (flip f r) a

> zipWith' f l _ [] b = map (f l) b

> zipWith' f l r (a:as) (b:bs) = (f a b) : zipWith' f l r as bs

> (.+) :: Num a => [a] -> [a] -> [a]

> (.+) = zipWith' (+) 0 0

> (.-) :: Num a => [a] -> [a] -> [a]

> (.-) = zipWith' (-) 0 0

> (.*) :: Num a => a -> [a] -> [a]

> (.*) a = map (a*)

> (*.) :: Num a => [a] -> a -> [a]

> (*.) a b = map (*b) a

> (.=) :: (Eq a,Num a) => [a] -> [a] -> Bool

> (.=) a b = and $ zipWith' (==) 0 0 a b

> instance (Eq a,Num a) => Eq (R a) where

> R a a' == R b b' = a==b && a'.=b'

A slightly more extended version of the Num interface:

> instance Num a => Num (R a) where

> fromInteger a = R (fromInteger a) []

> (R a a') + (R b b') = R (a+b) (a'.+b')

> (R a a') - (R b b') = R (a-b) (a'.-b')

> (R a a') * (R b b') = R (a*b) (a.*b' .+ a'*.b)

> negate (R a a') = R (negate a) (map negate a')

And some more functions for good measure:

> instance (Num a,Fractional a) => Fractional (R a) where

> fromRational a = R (fromRational a) []

> (R a a') / (R b b') = let s = 1/b in

> R (a*s) ((a'*.b.-a.*b') *. (s*s))

> instance Floating a => Floating (R a) where

> exp (R a a') = let e = exp a in R e (e .* a')

> log (R a a') = R (log a) ((1/a) .* a')

> sin (R a a') = R (sin a) (cos a .* a')

> cos (R a a') = R (cos a) (-sin a .* a')

The

d i provide a basis for these infinitesimals.

> d n = R 0 (replicate n 0 ++ [1])

We now have an infinite set of independent dual numbers,

d i, one for each natural i. Note that we have

(d i)*(d j)==0 for any i and j. Apart from this, they act just like before. For example

f(x+di)=f(x)+dif'(x).

We can use these compute partial derivatives. For example consider

> g x y = (x+2*y)^2/(x+y)

Computing

g (7+d 0) (0+d 1) gives

R 7.0 [1.0,3.0] so we know g(7,0) = 7, g

x(7,0)=1 and g

y(7,0)=3.

I'll use R as mathematical notation for the type given by

R Float. (I hope the double use of 'R' won't be confusing, but I want to be consistent-ish with Kock.)

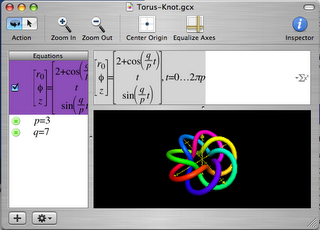

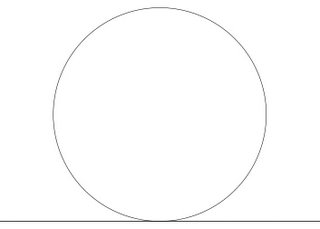

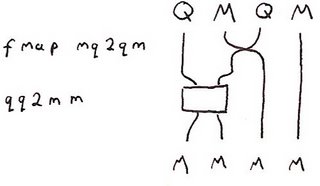

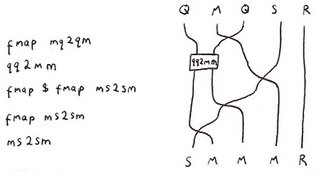

Before we do any more calculus, consider the following geometric figure:

A point is in the intersection of the circle and the line if it satisfies the equations:

> onS (x,y) = x^2+(y-1)^2==1

> onL (x,y) = y==0

Notice that in R this has many solutions. In fact, we find that

onS (a*d 1,0) && onL (a*d 1,0) gives True for any Float value a. Does this mean anything? Actually, it fits some people's intuition of what the intersection should look like better than the usual idea that there is only one intersection point. In fact, Protagoras argued that there was a short line segment in common between the circle and the line and that because Pythogoras's theorem gave only one solution the whole of geometry was at fault! Using R instead of the reals makes sense of this intuition. The points of the form (a*d 1,0) do, indeed, form a line tangent to the circle. In fact, there is a way to construct tangent spaces and vector fields directly from this - but instead I want to now consider Kock's construction which is slightly different.

Let D be the subset of R consisting of numbers whose square is zero. These numbers are in some sense infinitesimal. In differential geometry a vector field is intuitively thought of as an 'infinitesimal flow' on a manifold. A finite flow on a manifold, M, is simply a function f:

R×M→M such that f(x,0)=0. In f(x,t) we can think of t as time and each point x on the manifold flows along a path t→f(x,t). If f is differentiable, then as t becomes smaller, the path becomes closer and closer to a short line segment which we can draw as a vector. So the intuition is that a vector field is what you get in the limit as t becomes infinitesimal. The catch is that infinitesimal things aren't first class objects in differential geometry (so to speak). You can use infinitesimals for intuition, and I suspect almost all differential geoneters do, but actually talking about them is taboo. In fact, the definition of vector field in differential geometry is a bit of a kludge to work around this issue. When most people first meet the definition of a vector field as a differential operator it comes as quite a shock. But we have no such problem. In fact, Kock defines a vector field simply as a function

f:D×M→M

such that f(0,x)=x.

In the language of SDG, a vector field

is an infinitesimal flow. For example, consider

> transX d (x,y) = (x+d,y)

This simply translates along the x-axis infinitesimally. Of course this code defines a flow for non-infinitesimal values of d, but when considering this as a vector field we're only interested in when d is infinitesimal. transX defines a vector field that everywhere points along the x-axis.

For those familiar with the usual differential geometric definition of vector fields I can now make contact with that. In that context a vector field is a differential operator that acts on scalar functions on your manifold. In our case, we can think of it acting by infinitesimally dragging the manifold and then looking at what happens to the function that's dragged along. In other words, if we have a funtion, f, on the manifold, the vector field v, applied to f, is that function vf such that

f (f d x)==f x + d*vf x. For example with

> h (x,y) = x^2+y^2

h (transX (d 1) (1,2)) == R 5 [2] because h(x,y)=5 and h

x(x,y)=2.

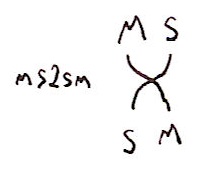

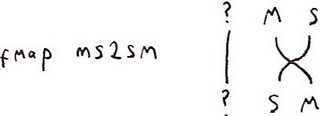

I think I have enough time to briefly show how to define the

Lie bracket in this context. If you have two vector fields, then intuitively you can view the Lie bracket as follows: take a point, map it using the first field by some small parameter d1, then map it using the second for time d2, then doing the first one by parameter -d1, and then the second by -d2. Typically you do not return to where you started from, but somewhere 'close'. In fact, your displacement is proportional to the product of d1 and d2. In other words, if your fields are v and w, then the Lie bracket [v,w] is defined by the equation

v (-d2) $ v (-d1) $ w d2 $v d1 x==vw (d1*d2) x where

vw represents [v,w]. But you might now see a problem. The way we have defined things

d1*d2 is zero if d1 and d2 are infinitesimal. Kock discusses this issue and points out that in order to compute Lie brackets we need infinitesimals whose squares are zero, but whose product isn't. I could go back and hack the original definition of R to represent a new kind of algebraic structure where this is true, but in the spirit of

Geometric Algebra for Free I have a trick. Consider d

1=1⊗d and d

2=d⊗1 in R⊗R. Clearly d

12=d

22=0. We also have d

1d

2=d⊗d≠0. And using the constructor as tensor product trick we can write:

> d1 = R (d 0) [] :: R (R Double)

> d2 = R 0 [1] :: R (R Double)

Let's test it out. Define three infinitesimal rotations:

> rotX d (x,y,z) = (x,y+d*z,z-d*y)

> rotY d (x,y,z) = (x-d*z,y,z+d*x)

> rotZ d (x,y,z) = (x+d*y,y-d*x,z)

Note that there's no need to use trig functions here. We're working with infinitesimal rotations so we can use the fact that sin d=d and cos d=1 for infinitesimal d. It is well known that [rotX,rotY]=rotZ and cyclic permutations thereof. (In fact, these are just the commutation relations for

angular momentum in quantum mechanics.)

We can write

> commutator d1 d2 f g = (g (-d2) . f (-d1) . g d2 . f d1)

> test1 = commutator d1 d2 rotX rotY

> test2 = rotZ (d1*d2)

and compare values of test1 and test2 at a variety of points to see if they are equal. For example

test1 (1,2,3) == test2(1,2,3). I don't know about you, but I'm completely amazed by this. We can get our hands right on things like Lie brackets with the minimum of fuss. This has many applications. An obvious one is in robot dynamics simulations where we frequently need to work with the Lie algebra of the group of rigid motions. This approach won't give us new algorithms, but it gives a very elegant way to express existing algorithms. It's also, apprently, close to

Sophus Lie's original description of the Lie bracket.

I have time for one last thing. So far the only manifolds I've considered are those of the form

Rn. But this stuff works perfectly well for varieties. For example, the rotations rotX, rotY and rotZ all work fine on the unit sphere. In fact, if T is the unit sphere define

> onT (x,y,z) = x^2+y^2+z^2==1

Notice how, for example,

onT (rotY (d 1) (0.6,0.8,0))==True, so rotY really does give an infinitesimal flow from the unit sphere to the unit sphere, and hence a vector field on the sphere. Compare this to the complexity of the traditional definition of a vector field on a manifold.

Kock's book also describes a really beautiful definition of the differential forms in terms of infinitesimal simplices, but I've no time for that now. And I've also no time to mention the connections with intuitionistic logic and category theory making up much of his book (which is a good thing because I don't understand them).

PS The D⊗D trick, implemented in C++, is essentially what I did in my

paper on photogrammetry. But there I wasn't thinking geometrically.

(I don't think I've done a very good job of writing this up. Partly I feel horribly constricted by having to paste back and forth between two text editors to get this stuff in a form suitable for blogger.com as well as periodically having to do some extra munging to make sure it is still legal literate Haskell. And diagrams - what a nightmare! So I hit the point where I couldn't face improving the text any more without climbing up the walls. What

is the best solution to writing literate Haskell, with embedded equations, for a blog?)