People who have just implemented interpreters and compilers are like people who've just had babies - they become monomaniacs incapable of talking about anything else, and showing you their latest pictures at any available opportunity. But I'm going to spare you the pictures. (Hmmm...does blogger.com support the classification of posts by type?)

My typeless pure functional lazy call-by-name language seems to be working out quite well so I thought I'd talk about two aspects of it:

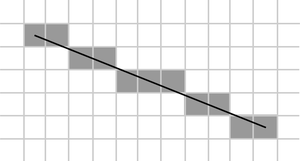

Firstly: my goal has been to construct something as simple as possible that is as powerful as possible. I've basically got it down to just five instructions: push an integer onto the stack, push a pointer to a function, push a duplicate of the nth item down on the stack, push a closure and tidy up a stack frame. Those 5 instructions are then translated into C. Everything else is provided by libraries (eg. plus, minus, seq) or is part of the runtime (ie. garbage collector and the loop that decides which reduction to perform next). The core compiler is basically ten lines of code - the other 300 lines are mainly the parser that has to deal with the quirkiness of any Haskell-like syntax. Yet with this tiny compiler that compiles to just these five operations I've found it possible to write things like a

monadic parser, implement monad transformers, and do simple logic programming using the list monad. I'm astonished by how far you can get with so little programming. The moral is, starting from next to nothing you get a lot more bang for your buck with functional programming. (Sometimes it even competes tolerably, performancewise, with

Hugs).

Secondly: I thought I'd mention my handling of monads. The compiler only supports three types - integers, pairs and functions so we have no type inferencing tricks to generate the glue to make monads as easy to use as they are in Haskell. Nonetheless I eventually figured out a way to make monads almost as painless as in Haskell. There were two things I needed to do:

(A) Redefine the semantics of " {" and "}". In Haskell anything between "do {" and "}" is glued together using the bind function where bind is overloaded. What I did was similar. Anything between "{" and "}" is glued together using a function. But I don't use the bind function. Instead I define "{ <statements> }" to be a function that uses its argument to bind the statements together. It's then easy to define something like:

do f = f bind.

and we end up with code that looks like Haskell's again. Unfortunately this definition is only useful in the presence of polymorphism because it commits us to using the global 'bind' function. So the way to deal with this is to

(B) Reify monads. I simply define a monad to be the pair (bind:return) where bind and return are the bind and return funtions for the monad. (I use ':' to define pairs as in my language non-null lists are simply pairs constructed form the head and tail of the list.) I can then define do as

do monad f = f (head monad).

return monad = tail monad.

So now the price I have to pay for the lack of types is just that I have to parameterise uses of do and return with the monad. This isn't too onerous, for example you get code looking like:

putLine l = if l then

doIO {

putChar (head l);

putLine (tail l)

}

else

returnIO 0.

where I've defined doIO = do IO etc.

It gets a little more complex with monad transformers. As monads are reified, a monad transformer in my language is an actual function that maps a monad to a monad. For example, here's part of the definition of the StateT monad transformer:

returnStateT monad x s = return monad (x:s).

bindStateT monad m k s = bind monad (m s) (\as -> k (head as) (tail as)).

doStateT monad f = f (bindStateT monad).

-- Actually construct the transformed monad.

StateT monad = bindStateT monad : returnStateT monad.

In some ways these definitions are actually simpler than the corresponding Haskell definitions. In Haskell you communicate information to the compiler about which bind and return to use by your choice of type. So you find yourself repeatedly wrapping and unwrapping objects (eg. using runStateT). No such issue here, a sequence of statements in the state monad can simply be applied immediately to the input state.

Anyway, the monad transformer

examples I came up with earlier now carry over to my new language like so:

prog = do (StateT State) {

liftStateT State (modify (plus 1));

a <- liftStateT State get;

modifyT State (plus 10);

b <- getT State;

liftStateT State $ put (a+b);

c <- getT State;

return (StateT State) $ c+1

}.

Note that I chose to make everything explicit. I could easily have made local definitions like lift = liftStateT State to make things look more like Haskell.

Anyway, I'm pretty pleased. With a few hours of coding here and there over a period of two weeks I've written my first compiler of standalone executables in 20 years and it's already at the stage where it wouldn't be hard to port its own compiler to itself. (My previous compiler was an adventure game DSL written in BASIC and based on the one

here (though I don't think the acronym 'DSL' existed then). I also implemented Forth on a

Psion II a bit later but it's debatable whether you'd call indirect threading by the name 'compilation'.)

I'll probably put a release on my web site some time in the next week. Not that it's actually useful for anything...

And all this computer science is too much. I really must get back to some real mathematics some time soon...