Haskell is so strict about type safety that randomly generated snippets of code that successfully typecheck are likely to do something useful, even if you've no idea what that useful thing is. This post is about what I found when I tried playing with the monad ListT [] [1], even though I had no clue what purpose such a monad might serve.

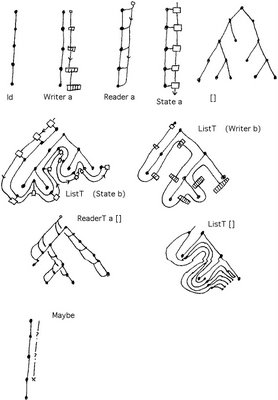

I've talked about using monad transformers a couple of times. One time I looked at compositions of various types of side-effect monad, and more recently I looked at compositions of the List monad with side-effects monads. So it was inevitable that I got onto composing the List monad with itself to get ListT [].

This is literate Haskell, modulo the unicode characters.

So start with the ordinary List monad, which is all about considering choices. For example, the expression

> import Data.List
> import Control.Monad.Writer
> import Control.Monad.List

> test1 = do
>   x <- [1,2,3]
>   return (2*x)

> go1 = test1

can be interpreted as a program that chooses x to be each of the values 1, 2 and 3 in turn, doubles each one and collects up the results to give [2,4,6]. The List monad will happily make choices within choices. So the expression

> test2 = do
>   x <- [1,2]
>   y <- [3,4]
>   return (x,y)

> go2 = test2

considers, for each possible choice of x, each possible choice of y. The result is [(1,3),(1,4),(2,3),(2,4)]. More generally, for fixed sets a,b,…,c the expression

    do
      x <- a
      y <- b
      …
      z <- c
      return (x,y,…,z)

returns the cartesian product a×b×…×c. In the fully general case, a,b,…,c could all depend on choices that were made earlier, but right now I'm talking about the case when a,b,…,c are all 'constant'.
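As a small sketch of the 'constant' case: when all the choices have the same type, the n-fold do-block is what `sequence` computes in the List monad, and the two-choice version can be written out by hand with `concatMap`. The names `cartesian` and `pairs` are made up for this illustration and are not part of the literate program.

```haskell
-- Sketch only: `cartesian` and `pairs` are hypothetical names.

-- The n-fold "constant choices" do-block, for choices of a single type.
-- In the List monad, sequence picks one element from each list in turn.
cartesian :: [[a]] -> [[a]]
cartesian = sequence

-- The two-choice example written without do-notation:
-- for each x, go through every y.
pairs :: [a] -> [b] -> [(a, b)]
pairs as bs = concatMap (\x -> map (\y -> (x, y)) bs) as
```

Here `pairs [1,2] [3,4]` gives the same list as test2, and `cartesian [[1,2],[3,4]]` gives the same choices as lists rather than tuples.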

Here's a slightly different way of looking at this. Suppose a person called Player (P for short) is playing a solitaire game. At the first turn, P chooses a move from the set of possible first moves, then chooses a possible move from the set of second moves and so on. We can see that if we write the code

    do
      move1 <- possible_moves_1
      move2 <- possible_moves_2
      …
      last_move <- possible_last_moves
      return (move1,move2,…,last_move)

the result is a list, each of whose elements is one possible sequence of plays in the game. If the possible_* are all 'constant' then it's just the cartesian product of the plays at each turn. But it's straightforward to adapt this code to enumerate all possible plays in a more general solitaire game, and that's exactly how the List monad is sometimes used to solve such games.
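To make the 'more general solitaire game' concrete, here is a minimal sketch in which the available moves depend on the current position. The game itself (a pile of tokens from which each move removes 1 or 2) and the names `movesFrom` and `plays` are assumptions invented for the example.

```haskell
-- Hypothetical toy solitaire: from a pile of n tokens, a move removes 1 or 2.
movesFrom :: Int -> [Int]
movesFrom n = [k | k <- [1, 2], k <= n]

-- All complete plays from a pile of n tokens, enumerated in the List monad.
plays :: Int -> [[Int]]
plays 0 = return []              -- nothing left: exactly one (empty) play
plays n = do
  m    <- movesFrom n            -- choose a move available in this position
  rest <- plays (n - m)          -- then play out the rest of the game
  return (m : rest)
```

For a pile of 3 this enumerates [[1,1,1],[1,2],[2,1]]: each element is one full sequence of moves, found by exactly the backtracking the List monad provides.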

So if List is about making choices, composing List with itself might seem to be about making two types of choice. And the most familiar situation where you need to iterate over two possible types of choices comes when you consider two player games. So I'll tell you a possible answer now:

ListT [] is the game analysis monad!

Let's look at some examples of code in the

ListT [] monad. As I pointed out

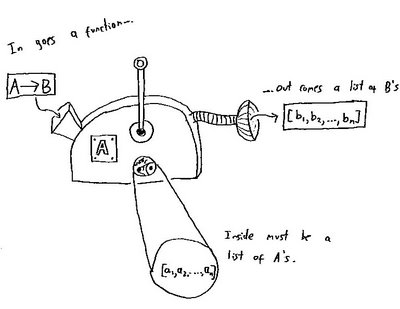

earlier, we use

lift a when we want a to be a list in the inner monad and

mlist a when we want a to be a list in the outer monad. So in

ListT [] we can expect to use both of these with lists. Consider

> mlist :: MonadPlus m => [a] -> m a
> mlist = msum . map return

> test3 = do
>   a <- lift [1,2]
>   b <- mlist [3,4]
>   return (a,b)

> go3 = runListT test3

The value is [[(1,3),(1,4)],[(2,3),(2,4)]]. There's a nice interpretation of this. Introduce a new player called Strategist (S for short). Think of lift as meaning a choice for S and mlist as a choice for P. We can read the above as: for each first play a that S could make, go through all the first plays b that P could make. What's different from the solitaire example is how the plays have been grouped in the resulting list. For each decision S could have made, all of P's possible plays have been listed together in a sublist. But now consider this expression:

> test4 = do
>   b <- mlist [3,4]
>   a <- lift [1,2]
>   return (b,a)

> go4 = runListT test4

We get: [[(3,1),(4,1)],[(3,1),(4,2)],[(3,2),(4,1)],[(3,2),(4,2)]]. Maybe you expected to see the same result as before but reordered. Instead we have a longer list. Our interpretation above no longer seems to hold. But actually, we can salvage it.

Go back to thinking about solitaire games. We can read the code for test2 as follows: First choose a value for x, say x=1. Now choose a value for y, say y=3. Put (1,3) in the list. Now backtrack to the last point of decision and choose again, this time y=4. Put (1,4) in the list. Now backtrack again. There are no more options for y so we backtrack all the way back to x and set x=2, and now start again with y=3, and so on.

Now we can reconsider test4 in a two player game. Think about the choices from the point of view of working through all of P's options while fixing one particular set of choices for S. (It took me a while to get this concept so I'll try to explain this a few different ways.) So P chooses b=3 and then S makes some choice, say a=1. Now we backtrack to P's next option, b=4. But on backtracking to P's last choice we backtracked through S's choice and on running forward again, we must make some choice for S, say a=1 again. There were 2 ways P could have played, so we end up with two distinct sequences of plays: (3,1) and (4,1) after P has worked through all of his options. We have a list with two possibilities: [(3,1),(4,1)]. But S could have played differently. In fact, because we play S's move twice for each sequence of P's moves there are three other ways this sequence could have come out, depending on S's strategy: [(3,1),(4,2)], [(3,2),(4,1)] or [(3,2),(4,2)]. And that is exactly what test4 gave: for each strategy that S could have chosen we get a list of all the ways P could have played against it. We get a list of 4 lists, each of which has two elements. Each of those elements is a sequence of plays.
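Control.Monad.List has been dropped from recent releases of mtl, so for readers who want to reproduce the two results without it, here is a minimal stand-in. It is a sketch under assumptions: the `ListT` below is a hand-written version with the classic "list inside a monad" bind, and `choiceS`, `choiceP`, `sThenP` and `pThenS` are hypothetical names playing the roles of lift, mlist, test3 and test4.

```haskell
import Control.Monad (ap, liftM)

-- Minimal stand-in for the old ListT: a list of results inside a monad m.
newtype ListT m a = ListT { runListT :: m [a] }

instance Monad m => Functor (ListT m) where
  fmap = liftM

instance Monad m => Applicative (ListT m) where
  pure x = ListT (return [x])
  (<*>)  = ap

instance Monad m => Monad (ListT m) where
  m >>= k = ListT $ do
    as  <- runListT m                -- run the inner monad to get a list of values
    bss <- mapM (runListT . k) as    -- continue after each of those values
    return (concat bss)

-- Hypothetical helper: a choice for S (the role lift plays for ListT []).
choiceS :: [a] -> ListT [] a
choiceS xs = ListT [[x] | x <- xs]

-- Hypothetical helper: a choice for P (the role mlist plays for ListT []).
choiceP :: [a] -> ListT [] a
choiceP xs = ListT [xs]

-- S chooses first, then P (the shape of test3).
sThenP :: [[(Int, Int)]]
sThenP = runListT $ do
  a <- choiceS [1, 2]
  b <- choiceP [3, 4]
  return (a, b)

-- P chooses first, then S (the shape of test4).
pThenS :: [[(Int, Int)]]
pThenS = runListT $ do
  b <- choiceP [3, 4]
  a <- choiceS [1, 2]
  return (b, a)
```

The mapM in the bind is where the 'strategy' behaviour comes from: it runs S's choice once for each of P's options, inside the inner List monad, so every combination of S's replies shows up as a separate sublist.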

I'll try to make precise what I mean by 'strategy'. By strategy we mean a way of choosing a move as a function of the moves your opponent has made. That's what we mean by a program to play a game: we give it a set of inputs and it then responds with a move. Its move at the nth turn is a function of everything you did for the previous n-1 turns. So consider the example above. After P has chosen from [3,4], S must choose from [1,2]. So S's strategy can be described by a function from [3,4] to [1,2]. There are 4 such functions. Once we've fixed S's strategy, there are 2 possible ways P can play against it. Again, we have 4 strategies, and 2 plays for each strategy.
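The count of 4 strategies can be checked directly. The sketch below enumerates every function from a finite domain to a finite codomain as a lookup table; `allFunctions` is a hypothetical name introduced for the illustration.

```haskell
-- Every function from dom to cod, each represented as a lookup table
-- pairing an input with the chosen output.
allFunctions :: [a] -> [b] -> [[(a, b)]]
allFunctions dom cod = mapM (\x -> [(x, y) | y <- cod]) dom

-- S's strategies in the example: functions from P's move to S's reply.
strategiesForS :: [[(Int, Int)]]
strategiesForS = allFunctions [3, 4] [1, 2]
```

Each table pairs a move of P with S's reply to it, so a table is also the list of plays P can make against that strategy. That is why `strategiesForS` comes out as the same list of 4 two-element lists that test4 produces.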

And here's another way of looking at this. We'll go back to simple games where the set of options available at each stage are constant and independent of the history of the game. Let <A,B> mean a game where A is the set of S's strategies and B is the set of ways P can play against those strategies. (The set B doesn't depend on S's choice from A because we're now limited to 'simple' games.) Now introduce a binary operator * such that <A,B>*<C,D> is the game where the moves start off being those in <A,B> but when that's finished we move onto <C,D>. In <A,B>*<C,D>, P's options are simply B×D. So we know that in some sense <A,B>*<C,D>=<X,B×D> for some X. Now S's first move is from A so that bit's easy. S's second move from C depends on P's move from B. So S's second move is described by a function from B to C. So <A,B>*<C,D>≅<A×C

B,D>. (I use ≅ because I mean 'up to isomorphism'. C

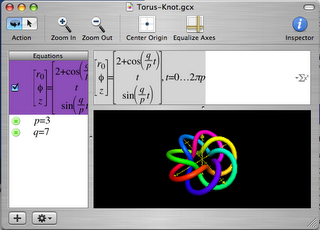

B×A also describes S's strategies equally well.) * is a curious binary operation. It might not look it, but it's associative (up to ismorphism). And it has a curious similarity to the

semidirect product of groups. Anyway, we can write test4 using this notation. It's computing <[1],[3,4]>*<[1,2],[1]> (up to isomorphism). [1] is just a convenient way of saying "no choice" and it gives us a way to allow P to move first my making S's first move a dummy one. Multiplying gives <[1,2]

[3,4],[3,4]>. [1,2]

[3,4] has 4 elements. So again we have 4 strategies with two ways to play against each of those strategies.

It's interesting to look at <A,B>*<C,D>*<E,F>. This is <A×C^B,B×D>*<E,F> which is <A×C^B×E^(B×D),B×D×F>. This has a straightforward explanation: S gets to choose from A directly, to choose from C depending on how P plays from B and to choose from E depending on how P plays from both B and D.
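One cheap sanity check on the * operation is to track only the sizes of the sets: S's strategies multiply as |A|·|C|^|B| and P's plays as |B|·|D|. The sketch below does that; `GameSize` and `compose` are hypothetical names, and agreement of sizes is of course only evidence for associativity up to isomorphism, not a proof.

```haskell
-- A 'simple' game summarised by sizes:
-- (number of strategies for S, number of ways P can play).
type GameSize = (Integer, Integer)

-- Sizes for <A,B>*<C,D>: S picks from A and a function from B to C,
-- P picks from B and from D.
compose :: GameSize -> GameSize -> GameSize
compose (a, b) (c, d) = (a * c ^ b, b * d)

-- test4 in this notation: <[1],[3,4]> * <[1,2],[1]>
test4Size :: GameSize
test4Size = compose (1, 2) (2, 1)
```

`test4Size` is (4,2): 4 strategies with 2 plays against each. Bracketing a triple product either way gives the same sizes, for example with (2,3), (4,5) and (6,7), which is consistent with the associativity claim.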

And yet another way of looking at things. Suppose S and P are going to play in a chess tournament with S playing first. Unfortunately, before setting out on the journey to the tournament S realises he’s going to be late and is going to be unable to communicate during the journey. What should S do? A smart move would be to email his first move to the tournament organisers before leaving on the journey. That would buy him time while P thinks of a response. Even better, S could additionally send a list of second moves, one for each move that P might take in his absence. Going further, S could send a list of third moves, one for each of the first two moves that P might take in his absence. These emails, containing these lists, are what I’m calling a strategy. When you enumerate a bunch of options in the ListT [] monad you end up enumerating over all strategies for S and all responses for P.

It appears there’s a kind of asymmetry. Why are we considering strategies for S, but plays for P? This is partly explained by the above paragraph. In ListT [] there is an implicit ordering where S’s moves are considered to be before P’s. So if one of S’s choices comes after P’s, it needs to be pulled before P’s and that can only happen if it’s replaced with a strategy. There’s also another interesting aspect to this. When considering how to win at a game, you don’t need to test your strategy against every possible strategy your opponent might have. You only need to test it against every possible way your opponent could play against it and they only need to find one play to show your strategy isn’t foolproof. So ListT [] describes exactly what you need to figure out a winning strategy for a game.
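In code, "some strategy beats every play" is an any-of-all over the nested list that runListT returns. The following is only a sketch of that reading: `hasWinningStrategy`, `winningStrategies` and the toy winning rule `sWinsToy` are all made up for the illustration.

```haskell
-- Outer list: S's strategies. Inner list: the plays P can make against one strategy.
-- S can force a win if some strategy wins against every play.
hasWinningStrategy :: (a -> Bool) -> [[a]] -> Bool
hasWinningStrategy sWins = any (all sWins)

-- The strategies that survive every play by P.
winningStrategies :: (a -> Bool) -> [[a]] -> [[a]]
winningStrategies sWins = filter (all sWins)

-- Hypothetical rule for the toy example: S wins when the two moves sum to an odd number.
sWinsToy :: (Int, Int) -> Bool
sWinsToy (b, a) = odd (b + a)

-- The value of test4 from the text, written out as data.
test4Result :: [[(Int, Int)]]
test4Result = [[(3,1),(4,1)],[(3,1),(4,2)],[(3,2),(4,1)],[(3,2),(4,2)]]
```

Under that made-up rule only the strategy "reply 2 to 3, reply 1 to 4" survives, so `winningStrategies sWinsToy test4Result` is [[(3,2),(4,1)]].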

Anyway, that’s enough theory. Here’s an application. The following code computes a winning strategy in the game of Nim. (If you don’t know Nim, then you’re in for a treat if you read up on it here. The theory is quite beautiful, but I deliberately don't use it here.) It uses brute force to find a winning strategy for S and additionally outputs a proof that this is a winning strategy by listing all possible ways the game could play out. Note that I’m using a WriterT layer on top of ListT [] (with a list of positions as its log) so I can log the moves made in each game.

I've also added another game, a variant of Kayles that I call Kayles'. In Kayles' there are n skittles in a row. On your turn you knock over a skittle and whichever of its immediate neighbours are still standing. The winner is the person who knocks over the last skittle. (The code describes the rules better than English text and you can adapt the code easily to play on any graph.)

Evaluate nim or kayles' to get a display of a winning strategy and a proof that it wins, ie. a list of all possible games against that strategy showing they lead to P losing.

> strategies moves start = do
>     -- S's move: a choice in the inner List monad
>     a <- lift $ lift $ moves start
>     tell $ [a]
>     let replies = moves a
>     -- If P has no reply, S made the last move and has won
>     if null replies then return () else do
>         -- P's move: a choice in the outer list
>         b <- lift $ mlist $ replies
>         tell $ [b]
>         strategies moves b

> nim = mapM_ print $
>     runListT $ execWriterT $ strategies moves start
>   where
>     start = [2,3,5]
>     -- A move reduces exactly one pile
>     moves [] = []
>     moves (a:as) = [a':as | a' <- [0..a-1]]
>                 ++ [a:as' | as' <- moves as]

> kayles' = print $ head $
>     runListT $ execWriterT $ strategies (kmoves (nbhd verts)) verts
>   where
>     -- Knock over skittle i and whichever neighbours are still standing
>     kmoves nbhd v = [v \\ nbhd i | i <- v]
>     verts = [1..13]
>     nbhd verts i = intersect verts [i-1,i,i+1]

For example nim starts [[[2,3,1],…]] because a move to piles of size 2, 3 and 1 is a win in Nim. (2⊕3⊕1=0 for those who know the theory.) kayles' starts [[[1,2,3,4,5,9,10,11,12,13],…]] which means a winning move is to knock down skittles 6, 7 and 8 (you may see why symmetry makes this an obvious good opening move).
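For those who do know the theory, the claim about [2,3,1] is easy to check independently of the brute-force search. The sketch below reuses the move rule from the nim code above; `nimSum`, `nimMoves` and `winningMoves` are hypothetical names, and this shortcut is not what the program above does.

```haskell
import Data.Bits (xor)

-- The nim-sum of a position: bitwise XOR of the pile sizes.
nimSum :: [Int] -> Int
nimSum = foldr xor 0

-- Same move rule as in the nim code: reduce exactly one pile.
nimMoves :: [Int] -> [[Int]]
nimMoves []     = []
nimMoves (a:as) = [a' : as | a' <- [0 .. a - 1]]
               ++ [a : as' | as' <- nimMoves as]

-- Moves that leave a position with nim-sum zero.
winningMoves :: [Int] -> [[Int]]
winningMoves = filter ((== 0) . nimSum) . nimMoves
```

From [2,3,5] the only move to a zero nim-sum is to [2,3,1], which agrees with the opening move found by the search.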

DISCLAIMER: I'm not claiming this is a good algorithm for solving games. Just that it illustrates the meaning of ListT [].

Finally a question. Are all monad transformers a form of semidirect product?

Footnotes:

[1] I haven't worked out the details but ListT [] probably isn't actually a monad. But it's close enough. It's probably associative up to ordering of the list, and as I'm using List as a poor man's Set monad [2], I don't care about ordering. An alternative ListT is provided here. I'm not sure that solves the problem.

[2] I'm pretty sure Set can't be implemented in Haskell as a monad. But that's another story.