Back in the 80s when I was a kid I came across a program for the BBC Micro that could tell what card you had picked from a deck of cards even though you'd buried your card within the deck wherever you wanted and had cut and shuffled the deck. I thought I'd try to implement a slightly more sophisticated version of the same trick that could handle multiple shuffles, and multiple types of shuffle.

The idea is that we prepare a deck of cards in some known sequence and have someone pick out a card and place it at the top of the deck. They perform some kind of randomisation procedure on the cards, eg. cut and shuffle it a couple of times, and then you get to look at the final sequence of cards. Can we tell which card was picked out?

Some probability theory

Let's formalise this a bit. Our decks will have

We want to figure out the initial state given some final state

We can use Bayes theorem to get:

Now

where the sum is over all

Each shuffle

Some computer science

I'd like to be able to chain a sequence of shuffles. But wait! There's a catch! Today's the day I finally want to get around to checking out the lambda expression support in C++. I've been putting this off for years. (I'm using gcc 4.7.) So I'm not going to have a Haskell non-determinism monad to make life easy.

Suppose I have two types of shuffle, type

There's another way of looking at this. You can skip this paragraph if you don't care about the connection to Haskell. But in Haskell you might do something like this by using a non-determinism monad, or even a probability monad. But as I pointed out a while back, you can fake every monad using the continuation monad. One way to implement continuations in C++ is to use continuation passing style. And that's what I'll do. The continuations are just the lambdas that I mentioned in the previous paragraph.

Some C++ code

> #include <iostream> > #include <cstdlib> > using namespace std;You can bump this up the deck size if you have the CPU power:

> const int deck_size = 13;A deck of cards is represented by a simple array of integers with each card being assigned a unique integer.

> struct Deck {

> int card[deck_size];

> bool operator==(const Deck &other) {

> for (int i = 0; i < deck_size; ++i) {

> if (card[i] != other.card[i]) {

> return false;

> }

> }

> return true;

> }

> };

The riffle shuffle works by splitting a deck into two piles and interleaving the parts onto a new destination deck. Here's a schematic diagram with the two piles coloured orange and blue:

The function riffle_helper helps loop through all possible riffles. I could assume that each card arriving at the destination is equally likely to come from the left pile or the right pile. But I observe that whenever I do a real riffle shuffle the cards seem to come in 'runs'. So if a card falls from the left pile then the next one is more likely to as well. That's just an empirical observation based on a small number of trials, you can tweak the probabilities yourself to fit reality better. (Oh, and I got this code upside-down compared to what people really do. I need to fix it when I have a moment...)

> enum Side {

> LEFT,

> RIGHT,

> NO_SIDE

> };

This function shuffles together cards from the locations given by left_ptr and right_ptr in src_deck into dest_deck, eventually calling cont on each result.

I use a template because I don't know the type of the lambda expression I'm passing in.

(If I want to know its type I think I have to mess with decltype. It's all a bit weird.)

> template<class Cont>

> void riffle_helper(double importance, int split,

> int left_ptr, int right_ptr, int dest_ptr, Side oldside,

> const Deck &src_deck, Deck dest_deck, Cont cont) {

> if (dest_ptr == deck_size) {

> cont(importance, dest_deck);

> return;

> }

First I deal with the cases where one or other of the piles is empty so there's no choice about where the next card is coming from:

> if (left_ptr >= split) {

> dest_deck.card[dest_ptr] = src_deck.card[right_ptr];

> riffle_helper(importance, split, left_ptr, right_ptr+1, dest_ptr+1, RIGHT, src_deck, dest_deck, cont);

> return;

> }

> if (right_ptr >= deck_size) {

> dest_deck.card[dest_ptr] = src_deck.card[left_ptr];

> riffle_helper(importance, split, left_ptr+1, right_ptr, dest_ptr+1, LEFT, src_deck, dest_deck, cont);

> return;

> }

> double p;

> if (oldside == NO_SIDE) {

> p = 0.5;

> } else {

> p = LEFT == oldside ? 0.75 : 0.25;

> }

> double new_importance = importance*p;

> dest_deck.card[dest_ptr] = src_deck.card[left_ptr];

> riffle_helper(new_importance, split, left_ptr+1, right_ptr, dest_ptr+1, LEFT, src_deck, dest_deck, cont);

> if (oldside == NO_SIDE) {

> p = 0.5;

> } else {

> p = RIGHT == oldside ? 0.75 : 0.25;

> }

> new_importance = importance*p;

> dest_deck.card[dest_ptr] = src_deck.card[right_ptr];

> riffle_helper(new_importance, split, left_ptr, right_ptr+1, dest_ptr+1, RIGHT, src_deck, dest_deck, cont);

> }

The function riffle iterates over all possible riffle shuffles of src_deck calling cont on each one.

Note that I assume that when the deck is split into two before shuffling together, each pile has at least 3 cards.

You may want to change that assumption.

> template<class Cont>

> void riffle(double importance, const Deck &src_deck, Cont cont) {

> double new_importance = importance/(deck_size-5);

> for (int split = 3; split < deck_size-2; ++split) {

> riffle_helper(new_importance, split, 0, split, 0, NO_SIDE, src_deck, Deck(), cont);

> }

> }

Iterate over all possible cuts of src_dec calling cont on each result.

I assume the cut leaves at least 3 cards in each pile.

> template<class Cont>

> void cut(double importance, const Deck &src_deck, Cont cont) {

> double new_importance = importance/(deck_size-5);

> for (int split = 3; split < deck_size-2; ++split) {

> Deck new_deck;

> for (int i = 0; i < deck_size; ++i) {

> if (i < deck_size-split) {

> new_deck.card[i] = src_deck.card[i+split];

> } else {

> new_deck.card[i] = src_deck.card[i-(deck_size-split)];

> }

> }

> cont(new_importance, new_deck);

> }

> }

Overhand shuffle remaining cards in src_deck to dest_deck.

Here's an attempt to represent what an overhand shuffle does.

It reverses the order of a deck that has been split into segments.

The order within each segment is left unchanged.

> template<class Cont>

> void overhand_helper(double importance, const Deck &src_deck,

> int cards_left, Deck dest_deck, Cont cont) {

> if (cards_left <= 0) {

> cont(importance, dest_deck);

> } else {

> double new_importance = importance/cards_left;

> for (int ncards = 1; ncards <= cards_left; ++ncards) {

> //

> // Take i cards from the source and place them at the bottom of the

> // destination.

> //

> for (int j = 0; j < ncards; ++j) {

> dest_deck.card[cards_left-ncards+j] = src_deck.card[deck_size-cards_left+j];

> }

> overhand_helper(new_importance, src_deck, cards_left-ncards, dest_deck, cont);

> }

> }

> }

Iterate over all possible overhand shuffles of cards in src_deck calling cont on each result.

In practice I often find overhand shuffles result in cards mysteriously jumping segments and messing up the algorithm, whereas poorly executed riffle shuffles still work fine.

I'm also assuming that each time a pile of cards is transferred the size of the pile is chosen uniformly from the set of all possible segments at that stage.

> template<class Cont>

> void overhand(double importance, const Deck &src_deck, Cont cont) {

> overhand_helper(importance, src_deck, deck_size, Deck(), cont);

> }

The final code doesn't bother computing the denominator from Bayes' theorem.

The most likely initial state is given by the one that results in the highest score.

If you normalise the scores to sum to one you'll get actual probabilities.

> int main() {

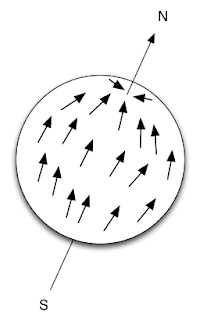

This is the array representation of the cards in the following picture:

> Deck target = {{ 10, 11, 6, 12, 1, 13, 8, 2, 9, 3, 5, 4, 7 }};

> Deck deck;Our known starting sequence is just 1, 2, 3, ..., J, Q, K. We iterate over all ways to pick a card out from this sequence and place it at the top.

> for (int k = 0; k < deck_size; ++k) {

> deck.card[0] = k+1;

> for (int i = 1; i < deck_size; ++i) {

> deck.card[i] = (i > k ? i : i-1)+1;

> }

> double likelihood = 0.0;

Here is where I use the lambdas.

For this example I'm doing an overhand shuffle followed by a riffle shuffle.

(The syntax is pretty bizarre and its also weird that I kinda sorta specify the type of my lambda but that's not really what the type of the expression is. But having manually faked and lifted lambdas many times in C++ I can see why it's the way it is.) Note how I've made likelihood mutable and have given these lambda expressions write access to it.

> overhand(1.0, deck, [&likelihood, target](double importance, Deck &deck) -> void {

> riffle(importance, deck, [&likelihood, target](double importance, Deck &deck) -> void {

> if (deck == target) {

We sum the probabilities for all ways of generating the target deck:

> likelihood += importance; > } > }); }); > cout << "If top card = " << deck.card[0] << endl; > cout << "then unnormalised probability = " << likelihood << endl; > cout << endl; > }Run the above code and you get unnormalised probabilities

> }

If top card = 4 then unnormalised probability = 5.7568e-12 If top card = 6 then unnormalised probability = 5.37301e-11 If top card = 7 then unnormalised probability = 1.791e-11In fact, I had chosen 6. Some discussion

Don't expect it to work perfectly! It can only give probabilities but it's often surprisingly good. But there is a lot of room for improvement. Some work looking at how people actually shuffle could give a better probabilistic model.

Some exercises.

1. The code can be made orders of magnitude faster. The final shuffle is performed and then the result is compared to the target sequence. But you can start comparing cards with the target before the shuffle is finished. Most times you'll only need to look at the first card of the result of a shuffle before you know you haven't matched the target. Fixing this will give a big speed up.

2. The continuation passing style makes it easy to incorporate other sources of knowledge. For example if you 'accidentally' peek at the bottom card after the first shuffle you can incorporate that knowledge into the algorithm. Figure out how.

3. Write lots more kinds of shuffles and experiment. I'm hoping someone good with magic will come up with a sequence of operations that looks hopelessly random but allows a good probability of recovering the chosen card. You could also combine this with other techniques such as designing shuffles that maintain various kinds of invariant.

4. The code can be rewritten to work backwards from the final state to the initial states. Work out how to do this. (This parallels ray-tracing where you can work from eye to light or from light to eye.)

5. We're doing the same work over and over again. We don't need to compute all of the shuffles for each initial state. We can compute each shuffle once and reuse it on each initial state. Try implementing it.