Isospin

The idea behind symmetry in physics is that there are things that you can change without making a difference to how a system evolves. I've already given the example of how rotating a pool table has no effect on the physics of the game. But here's another very different symmetry: in particle accelerator events that involve only the strong force, it makes no difference whether you're working with protons or neutrons. The strong force is unable to distinguish between these two types of particle. So we have a nice symmetry: for each proton or neutron in a system, we have a group of size two corresponding to the fact that we can swap one for the other. Now clearly protons and neutrons are different: they have different charges. But that only makes a difference to electromagnetic interactions, not strong interactions. This symmetry is called isospin symmetry.

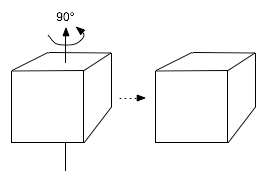

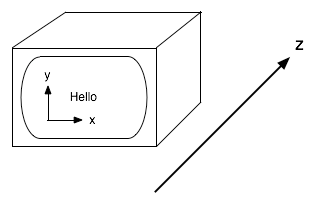

But it gets more interesting when we consider quantum mechanics. Represent a proton by a vector (1,0) and a neutron by a vector (0,1). Quantum mechanics allows us to form superpositions by taking linear combinations of physical states to get new ones. So if (1,0) is a physical state, and (0,1) is also one, then so is (0.5,0.5). This is like the Schrödinger's cat state: it's a mixture of proton and neutron. In fact, we can form states (a,b) for any complex numbers a and b. So now we have scope for even more symmetry because not only should neutrons and protons be interchangeable, any mixture should be interchangeable with any other. What Heisenberg proposed was this: maybe the proton and neutron, considered as 2D vectors, have rotational symmetry just like the pool table. (Well, I don't think he was thinking in terms of pool tables.) Because we're dealing with complex numbers, he proposed a symmetry group called SU(2) instead of SO(2), but it's a similar kind of thing, just the complex number variation of the 2D rotation group. SU(2) acts on the (complex) 2D space spanned by the proton vector (1,0) and the neutron vector (0,1), and so this space forms what I previously called a Lie group representation. The proton and neutron form a 2-dimensional representation of SU(2).

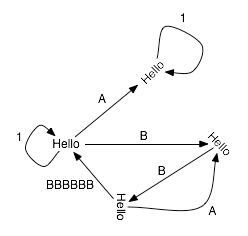
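To make the SU(2) action on the proton/neutron doublet a bit more concrete, here is a minimal sketch in plain Python. It assumes the standard parametrisation of an SU(2) matrix as [[a, -conj(b)], [b, conj(a)]] with |a|² + |b|² = 1. The function names (`su2`, `act`, `norm_sq`, `det`) are made up for this illustration and don't come from any library.

```python
import math

PROTON = (1 + 0j, 0j)   # the vector (1,0)
NEUTRON = (0j, 1 + 0j)  # the vector (0,1)

def su2(alpha, beta):
    """Build an SU(2) matrix from two numbers (not both zero).

    The pair is rescaled so that |a|^2 + |b|^2 = 1, then placed in the
    standard form [[a, -conj(b)], [b, conj(a)]].
    """
    scale = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    a, b = alpha / scale, beta / scale
    return ((a, -b.conjugate()), (b, a.conjugate()))

def act(u, state):
    """Apply the 2x2 matrix u to a (proton, neutron) amplitude pair."""
    (u00, u01), (u10, u11) = u
    p, n = state
    return (u00 * p + u01 * n, u10 * p + u11 * n)

def norm_sq(state):
    """Squared length of a state vector."""
    return sum(abs(c) ** 2 for c in state)

def det(u):
    """Determinant of a 2x2 matrix."""
    (u00, u01), (u10, u11) = u
    return u00 * u11 - u01 * u10

# An arbitrary "rotation": it turns a pure proton into a mixture,
# but keeps the length of the state vector fixed.
u = su2(1 + 2j, 3 - 1j)
mixed = act(u, PROTON)
print(mixed, norm_sq(mixed), det(u))

# A special case: this element turns a proton into a neutron.
swap = su2(0, 1)
print(act(swap, PROTON))
```

The point of the sketch is that swapping proton for neutron is just one element of a continuous family of "rotations", all of which preserve the length of the state and have determinant 1.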

So let's think about this more generally. If we have n particles that we suspect are connected by some symmetry group G, then they must form an n-dimensional representation of the group. Such a collection of particles is also called a multiplet. But here's the cool thing: if we have some kind of classification theorem listing the kinds of symmetry groups we expect to see in nature, and if we have a theorem classifying all of their representations, then we expect the particles of nature to be collected into families of similar particles that are organised just like those representations. So just knowing some pure mathematics should tell us a lot about particle physics.

Simple Lie Groups and E8

I've already said a little about how mathematicians go about the process of classifying representations of Lie groups by looking at maximal tori. Now I want to look at how Lie groups themselves are classified. Lie groups can often be built out of smaller pieces. This suggests the idea of finding the basic building blocks which can't be built out of simpler Lie groups. For a large class of groups, the basic building blocks are known as the simple Lie groups. (Now you know what the "Simple" in the title of Garrett Lisi's paper is about.) A priori you might imagine that you have all kinds of freedom when you try to construct new Lie groups. Incredibly you don't, and the list of simple Lie groups is quite...well...simple. The simple Lie groups fall into seven families called A, B, C, ..., G. The individual members of these families are given names like C4, where the integer is the dimension of the maximal torus. Four of the families, A to D, are fairly straightforward, and the groups form infinite sequences A1, A2, A3 and so on. Some of these are familiar. For example, the B series are the rotation groups in odd-dimensional spaces. B1 is just another name for SO(3). But curiously, the other three families, E, F and G, are finite. These are known as the exceptional Lie groups. The complete list is E6, E7, E8, F4 and G2. (What's even more exceptional about these is that many other branches of mathematics have exceptional objects too, and they're closely related to these. And now you know what 'exceptional' in that title means.) E8 is the highest-dimensional of the exceptional simple Lie groups. In other words, you can think of it as the largest possible symmetry we could have that isn't simply built out of smaller pieces, or isn't just part of a straightforward sequence of symmetries. But it's not 'simple' in the ordinary sense of the word: it's 248-dimensional.
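The classification is compact enough to fit in a few lines of code. The sketch below tabulates the dimension of each simple Lie group from its family letter and rank, using the standard formulas for the four infinite families and a lookup table for the five exceptional groups. The function name `dimension` is just something made up for this illustration.

```python
def dimension(family, rank):
    """Dimension of the simple Lie group with the given family and rank.

    Note: for very small ranks some of the classical names coincide or
    stop being simple (for example B1 and A1 have the same dimension, and
    D1 and D2 are not simple), so treat those cases with care.
    """
    classical = {
        "A": lambda n: n * (n + 2),      # A_n <-> SU(n+1)
        "B": lambda n: n * (2 * n + 1),  # B_n <-> SO(2n+1)
        "C": lambda n: n * (2 * n + 1),  # C_n <-> Sp(2n)
        "D": lambda n: n * (2 * n - 1),  # D_n <-> SO(2n)
    }
    exceptional = {
        ("E", 6): 78,
        ("E", 7): 133,
        ("E", 8): 248,
        ("F", 4): 52,
        ("G", 2): 14,
    }
    if family in classical:
        if rank < 1:
            raise ValueError("rank must be a positive integer")
        return classical[family](rank)
    if (family, rank) in exceptional:
        return exceptional[(family, rank)]
    raise ValueError(f"no simple Lie group called {family}{rank}")

for name in [("A", 1), ("B", 1), ("C", 4), ("G", 2), ("E", 8)]:
    print(f"{name[0]}{name[1]}: {dimension(*name)}")
```

B1 comes out as 3, which matches the three independent axes you can rotate about in SO(3), and E8 comes out as 248.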

So if we could arrange the particles of nature into a family of size 248 then we'd have strong evidence that E8 was the symmetry of nature. This is what Lisi has attempted to do.

More Spontaneously Broken Symmetry

But there's a complication. Above I talked about the SU(2) symmetry of protons and neutrons. But we know that symmetry doesn't hold exactly because the electromagnetic force doesn't respect it. In fact, photons react to protons and neutrons quite differently. But there's a way out: broken symmetry. In earlier posts I've already talked about this in the case of pool. But now consider a system like a sealed container of water. Again, fundamental principles suggest that this should have SO(3) symmetry. But we know that if we tilt a container of water the water doesn't tilt with the container; the level of the surface remains aligned perpendicular to gravity. On the other hand, as we heat up the water it eventually boils. At that point it's hard to tell gravity is at work at all because the force of gravity is so small compared to the intermolecular forces at work in a gas. The higher the temperature, the harder it is to see the local gravity. So pumping energy into a system serves to remove any kind of local accidents of history to reveal the true symmetry. The idea in particle physics is that at low energies we see a reduced symmetry, but at higher energies we should see more symmetries coming into play. In fact, this idea works incredibly well, and in 1979 the Nobel Prize was awarded to Glashow, Salam and Weinberg for realising that at higher energies the reduced symmetry of electromagnetism (which, by the way, is SO(2)) could be seen as part of a bigger symmetry. So what we expect to see is a chain of subgroups, one contained in the next, corresponding to physics at different energies. In practice physicists use Lie algebras instead of Lie groups most of the time because infinitesimal operations are usually much easier to work with, but the principle is the same.
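The water example can be turned into a small numerical check. This is only a sketch under an assumption of my own choosing: gravity points along the negative z axis. Rotations about the vertical axis (an SO(2) subgroup of SO(3)) leave the gravity direction alone, while a rotation about a horizontal axis does not. That surviving SO(2) is the "reduced symmetry" seen at low energy. The helper names here are invented for the illustration.

```python
import math

GRAVITY = (0.0, 0.0, -1.0)  # assumption: "down" is the negative z direction

def rot_z(theta):
    """Rotation by theta about the vertical (z) axis."""
    c, s = math.cos(theta), math.sin(theta)
    return ((c, -s, 0.0), (s, c, 0.0), (0.0, 0.0, 1.0))

def rot_x(theta):
    """Rotation by theta about a horizontal (x) axis, i.e. a tilt."""
    c, s = math.cos(theta), math.sin(theta)
    return ((1.0, 0.0, 0.0), (0.0, c, -s), (0.0, s, c))

def apply(matrix, vector):
    """Multiply a 3x3 matrix by a 3-vector."""
    return tuple(sum(m * v for m, v in zip(row, vector)) for row in matrix)

def preserves(matrix, vector, tol=1e-12):
    """True if the rotation leaves the vector unchanged (within tol)."""
    return all(abs(a - b) < tol for a, b in zip(apply(matrix, vector), vector))

# Spinning the container about the vertical axis is still a symmetry...
print(preserves(rot_z(0.7), GRAVITY))
# ...but tilting it is not, because gravity picks out a direction.
print(preserves(rot_x(0.3), GRAVITY))
```

The full SO(3) is still a symmetry of the underlying laws. It is the particular state of the system, with gravity picking a direction, that only shows the smaller subgroup.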

So now I can finally say what Lisi has attempted to do: he has arranged all of the particles of nature to form a representation of E8. He has then found subgroups of E8 corresponding to the breaking of this symmetry. At low energies, the different forces of nature see different symmetries corresponding to these subgroups. As these subgroups correspond to less symmetry, we end up with smaller sets of particles that are interchangeable with each other, and so smaller multiplets. So the multiplets of E8 break up into smaller multiplets corresponding to representations of these subgroups. Lisi has shown how to arrange the particles of nature into multiplets, and has also shown how to break up these multiplets into smaller ones that correspond to the properties of the familiar fundamental forces we can observe in the lab. There are all kinds of problems with the way he's done this, which make the work controversial. But in spirit, it's not so different from what physicists have been doing for decades.
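To get a feel for what "breaking up into smaller multiplets" means numerically, here is a minimal sketch that checks the bookkeeping for a few standard textbook ways the 248-dimensional representation of E8 splits under a subgroup. These are not the specific subgroups Lisi uses. They are chosen only because the dimensions are well known, and the labels are plain strings for this illustration.

```python
import math

E8_DIM = 248

# Each entry lists the pieces the 248 splits into under a subgroup.
# A piece like (56, 2) means "56-dimensional under the first factor,
# 2-dimensional under the second", so it contributes 56 * 2 states.
BRANCHINGS = {
    "SO(16)": [(120,), (128,)],
    "E7 x SU(2)": [(133, 1), (1, 3), (56, 2)],
    "E6 x SU(3)": [(78, 1), (1, 8), (27, 3), (27, 3)],
}

def total_dimension(pieces):
    """Add up the sizes of all the smaller multiplets."""
    return sum(math.prod(piece) for piece in pieces)

for subgroup, pieces in BRANCHINGS.items():
    print(subgroup, total_dimension(pieces), total_dimension(pieces) == E8_DIM)
```

In every case the smaller multiplets add back up to 248: no states are gained or lost when the symmetry is reduced, they are just regrouped into smaller families.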
