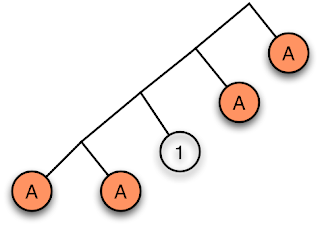

Last time we looked at a method for testing whether propositions of provability logic were valid by looking at the consequences of propositions within nested collections of worlds. This lends itself naturally to an algorithm that we can implement in Haskell. The diagrams I used last time are a variant on what are known as tableaux. But tableaux can be used in a number of ways and so we need code that is suitably generalised. In the code that follows I've ensured that the core algorithm has an interface that is suitable for carrying out the four tasks I'll demand of it. This means that the code leans more towards practicality than mathematical elegance.

And I apologise in advance: this post is mostly a bunch of implementation details. We'll get back to some mathematics next time. Nonetheless, by the end of this post you'll have working code to test the validty of propositions of provability logic.

Central to tableau algorithms is pattern matching. I'd like the Haskell pattern matcher do much of the work, and to increase its flexibility I'll need this extension:

> {-# LANGUAGE ViewPatterns #-}

We'll need these libraries too:> import Control.Applicative > import Control.Arrow > import Control.Monad > import Data.Function > import List > import Maybe > import Text.ShowLogical Propositions

Now we'll need a bunch of logical operators. The first three are constructors for a proposition type and the rest are sugar to make expressions look nicer:

> infixr 1 :-> > infixr 2 :\/ > infixr 3 :/\ > infixr 1 --> > infixr 1 <-- > infixr 1 <-> > infixr 2 \/ > infixr 3 /\ > (\/) = (:\/) > (/\) = (:/\) > (-->) = (:->) > (<--) = flip (:->) > p <-> q = (p :-> q) :/\ (q :-> p)Here's our basic proposition type:

> data Prop = Letter String > | Prop :\/ Prop > | Prop :/\ Prop > | Prop :-> Prop > | Box Prop > | Dia Prop > | F > | T > | Neg Prop deriving (Eq, Ord)I want show to know about operator precedence for propositions:

> instance Show Prop where > showsPrec p (a :/\ b) = showParen (p>3) $ showsPrec 3 a . showString " /\\ " . showsPrec 3 b > showsPrec p (a :\/ b) = showParen (p>2) $ showsPrec 2 a . showString " \\/ " . showsPrec 2 b > showsPrec p (a :-> b) = showParen (p>1) $ showsPrec 1 a . showString " --> " . showsPrec 1 b > showsPrec p (Neg r) = showParen (p>4) $ showString "Neg " . showsPrec 5 r > showsPrec p (Box r) = showParen (p>4) $ showString "Box " . showsPrec 5 r > showsPrec p (Dia r) = showParen (p>4) $ showString "Dia " . showsPrec 5 r > showsPrec p (Letter n)= showParen (p>5) $ showsPrec 6 n > showsPrec p T = showString "T" > showsPrec p F = showString "F"Some simple rules for simplification of some logical expressions:

> simplify p = let simplify' (a :\/ F) = a > simplify' (F :\/ b) = b > simplify' (a :/\ T) = a > simplify' (T :/\ b) = b > simplify' (a :\/ T) = T > simplify' (T :\/ b) = T > simplify' (a :/\ F) = F > simplify' (F :/\ b) = F > simplify' (F :-> b) = T > simplify' (T :-> b) = b > simplify' (a :-> F) = Neg a > simplify' (a :-> T) = T > simplify' (Neg T) = F > simplify' (Neg F) = T > simplify' (Box T) = T > simplify' (Dia F) = F > simplify' z = z > in case p of > a :/\ b -> let a' = simplify a > b' = simplify b > in simplify' (a' :/\ b') > a :\/ b -> let a' = simplify a > b' = simplify b > in simplify' (a' :\/ b') > a :-> b -> simplify' (simplify a :-> simplify b) > Box a -> simplify' (Box (simplify a)) > Dia a -> simplify' (Dia (simplify a)) > Neg (Neg a) -> simplify a > a -> aKinds of Proposition

I'm actually going to use more than one proposition type. So when we do case analysis I need to make my patterns more abstract so they can work with multiple types. I'm going to use the PropType type to represent the ways we're going to classify logical propositions. The types are:

1. Atomic: A single letter or its negation

2. Constant: Simply T or F or a negation thereof.

3. DoubleNegation.

4. Disjunction: used to represent things like a∧b or ¬(a∨b).

5. Conjunction: used to represent things like a∨b or ¬(a∧b).

6. Provability: These are statements about provability like those starting with ◻ or ¬◊.

7. Consistency: These are statements about consistency like those starting with ◊ or ¬◻.

As this type is a simple container we can make it a functor too.

> data PropType a = Atomic a > | Constant a > | DoubleNegation a > | Disjunction a a > | Conjunction a a > | Provability a > | Consistency a > instance Functor PropType where > fmap f (Atomic a) = Atomic (f a) > fmap f (Constant a) = Constant (f a) > fmap f (DoubleNegation a) = DoubleNegation (f a) > fmap f (Provability a) = Provability (f a) > fmap f (Consistency a) = Consistency (f a) > fmap f (Conjunction a b) = Conjunction (f a) (f b) > fmap f (Disjunction a b) = Disjunction (f a) (f b)I'll introduce a typeclass that will allow us to use the PropType view to query propositions:

> class PropTypeable a where > propType :: a -> PropType a > neg :: a -> a > isF :: a -> Bool > negative :: a -> Bool > positiveComponent :: a -> PropAnd now here we have the cases that I summarised in English above:

> instance PropTypeable Prop where > propType (a :\/ b) = Disjunction a b > propType (a :/\ b) = Conjunction a b > propType (Neg (a :\/ b)) = Conjunction (Neg a) (Neg b) > propType (Neg (a :/\ b)) = Disjunction (Neg a) (Neg b) > propType (a :-> b) = Disjunction (Neg a) b > propType (Neg (a :-> b)) = Conjunction a (Neg b) > propType (Neg (Neg a)) = DoubleNegation a > propType (Box a) = Provability a > propType (Neg (Box a)) = Consistency (Neg a) > propType (Dia a) = Consistency a > propType (Neg (Dia a)) = Provability (Neg a) > propType (Letter a) = Atomic (Letter a) > propType (Neg (Letter a)) = Atomic (Neg (Letter a)) > propType T = Constant T > propType F = Constant F > propType (Neg F) = Constant T > propType (Neg T) = Constant F > neg = Neg > isF F = True > isF (Neg T) = True > isF _ = False > positiveComponent (Neg a) = a > positiveComponent a = a > negative (Neg _) = True > negative _ = FalseIt'll be a while before we need the full generality so it's going to seem like overkill for the moment!

And some pre-packaged letters for convenience:

> [a, b, c, d, p, q, r, s, t] = map (Letter . return) "abcdpqrst"We're going to need some operations that act on lists.

placesWhere finds all of the elements of a list for which some predicate holds. Instead of just listing the elements that match, it lists the elements paired with the rest of the list after the matching element is removed. We can think of these pairs as elements and their surrounding context:

> placesWhere p [] = [] > placesWhere p (x:xs) = let r = map (second (x:)) $ placesWhere p xs > in if p x then ((x, xs) : r) else rThis finds something in the intersection of two sets using a given 'equality' predicate for matching. As we may be using a predicate different from == we need to see both of the (possibly different) elements that satisfy the predicate.

> findIntersection eq a b = listToMaybe [(x, y) | x <- a, y <- b, x `eq` y]Sometimes we'll meet propositions that we can start to reason about using ordinary propositional calculus. These will match the propositional predicate:

> propositional (propType -> DoubleNegation _) = True > propositional (propType -> Conjunction _ _) = True > propositional (propType -> Disjunction _ _) = True > propositional _ = FalseOn the other hand the provability predicate is used to identify propositions that need rules pertaining to provability and consistency:

> provability (propType -> Provability _) = True > provability (propType -> Consistency _) = True > provability _ = FalseThe Algorithm

And now we're almost ready to implement the tableau rules. Because we'll be using tableaux in a number of different ways I need lots of hooks into the algorithm that can perform different operations. I've collected all of these hooks into a single type. The algorithm will take a proposition of type prop (which will be Prop for the first three cases) and produce something of type result.

> data TableauRules prop result = TableauRules {

If our result corresponds to a world that is self-contradictory it is said to close. Here's how we indicate a closed (and hence not really existing) world. In the simplest case we won't actually store any information about a world, just whether or not it closes. So closes will be the identity function:> closes :: result -> Bool,Occasionally we'll find a world with something obviously equivalent to F in it. It closes. Here's what we want to return in that case. The argument is the offending proposition:

> foundF :: prop -> result,Sometimes we'll find a pair that obviosuly contradict, like a and ¬a:

> foundContradiction :: (prop, prop) -> result,And sometimes we'll find an open world (ie. a real non-closed one). This function gets handed the list of propositions that have been found to hold in it:

> open :: [prop] -> result,Here's what we do when we find a conjunction. I hope you remember that when we meet a conjunction we can delete it and replace it with the two subpropositions. conjRule is handed the subpropositions as well as the result from proceeding with the tableau rule for conjunctions:

> conjRule :: prop -> prop -> result -> result,Disjunctions work a little differently. When handling a∨b, say, we need to handle two subtableaux, one with a and one with b. The first argument to disjRule rules is the disjunction itself, the next two are the left and right subpropositions, and the last two arguments are the results of continuing the two subtableaux.

> disjRule :: prop -> prop -> prop -> result -> result -> result,With doubleNegation we get to see propositions that have undergone double negation elimination.

> doubleNegation :: prop -> result -> result,When we use Dia to open new worlds we need to ensure that each of these subworlds is valid. Each subworld is processed with processWorld . For example, when we're drawing tableau diagrams we can use this book to draw a frame around the subtableaux. We then fold together these subworlds using combineWorlds:

> processWorld :: prop -> result -> result, > combineWorlds :: result -> result -> result,Lastly we have our driver function that kicks off the whole tableau algorithm on a list of propositions:

> tableau :: [prop] -> result -> result > }We can now implement a tableau computation algorithm that supports these hooks. We'll start with the check for whether or not we have an immediate contradiction:

> simpleClosure rules ps = case find isF ps of > Just a -> foundF rules aSplit the propositions into those that are negated and those that are not. We're looking for propositions in one part that directly contradict propositions in the other:

> Nothing -> > let (neg, pos) = partition negative ps > maybePair = findIntersection ((==) `on` positiveComponent) neg pos > in case maybePair of > Just pair -> foundContradiction rules pair > Nothing -> open rules psDouble negation elimination is straightforward to apply. Delete the original proposition and replace it with the version without double negation:

> applyDNeg rules p a props = doubleNegation rules a $ > applyPropositional rules (a : delete p props)The rule for handling conjunctions. We delete the conjunction from the current list of propositions and replace it with the two subpropositions:

> applyConj rules p a b props = conjRule rules a b $ > applyPropositional rules (a : b : delete p props)Disjunctions require running two separate subtableaux:

> applyDisj rules p a b props = > let props' = delete p props > left = applyPropositional rules (a : props') > right = applyPropositional rules (b : props') > in disjRule rules p a b left rightHere we tie together the rules for propositional calculus. We use a bit of case analysis to decide which rule to apply, and if no rule applies we try the provability rules instead:

> applyPropositional rules props = > let t = simpleClosure rules props in if closes rules t > then t > else case find propositional props of > Nothing -> applyProvability t rules props > Just p -> case p of > (propType -> DoubleNegation q) -> applyDNeg rules p q props > (propType -> Conjunction a b) -> applyConj rules p a b props > (propType -> Disjunction a b) -> applyDisj rules p a b propsWhen we've exhausted all possible rules from propositional calculus we scan for propositions like Dia p or Neg (Box p). These may imply the existence of subworlds. We then try to instantiate these subworlds, seeded according to the rules I gave in the previous article:

> applyProvability t rules props = > let impliedWorlds = placesWhere consistency props > consistency (propType -> Consistency _) = True > consistency _ = False > testWorld (p@(propType -> Consistency q), props) =In the following line, neg p corresponds to the application of Löb's Theorem. provabilities is the list of propositions inherited by a subworld from statements about provability in the parent:

> let tableau = runTableau rules (q : neg p : provabilities) > provabilities = do > p@(propType -> Provability q) <- props > [p, q] > in processWorld rules p tableau > in foldr (combineWorlds rules) t (map testWorld impliedWorlds)And finally, here's where we kick our algorithm off:

> runTableau rules props = tableau rules props $ applyPropositional rules propsTesting Validity

We can now use a set of simple rules to test the validity of propositions. As mentioned above, the return data is a Bool used to indicate whether a subworld was invalid. By and large, these rules do the trivial thing. Note how the rule for disjunction requires both of the alternatives to be invalid in order to completely invalidate, so we use (&&). But when considering subworlds, just one bad subworlds is enough to invalidate a world:

> validRules = TableauRules {

> closes = id,

> open = \_ -> False,

> foundF = \_ -> True,

> foundContradiction = \_ -> True,

> conjRule = \_ _ t -> t,

> disjRule = \_ _ _ -> (&&),

> doubleNegation = \_ t -> t,

> combineWorlds = (||),

> processWorld = \_ t -> t,

> tableau = \_ t -> t

> }

We can now write a simple validty test. We negate the proposition we're interested in and test whether the implied world closes:> valid p = runTableau validRules [neg p]Here's a small regression test to ensure everything works. It's just a bunch of examples that I worked out by hand or lifted from Boolos's book:

> valids = [ > T, > a :-> a, > Box a :-> Box a, > Box a :-> Box (Box a), > Box (Box a :-> a) :-> Box a, > Box F <-> Box (Dia T), > let x = p :/\ q :-> r :-> a in Box (Box x :-> x) :-> Box x, > F :-> Dia p, > Box (Dia p) :-> Box (Box F :-> F), > (Box F \/ q /\ Dia (Box F /\ Neg q)) <-> > (Dia (Box F \/ q /\ Dia (Box F /\ Neg q)) > --> q /\ Neg (Box (Box F \/ q /\ Dia (Box F /\ Neg q) > --> q))) > ] > invalids = [ > F, > a :-> Box a, > Box a :-> a, > Box (Box a :-> a) :-> a, > Dia T, > Box (Dia T), > Neg (Box F), > (Box F \/ p /\ Dia (Box F /\ Neg q)) <-> > (Dia (Box F \/ q /\ Dia (Box F /\ Neg q)) > --> q /\ Neg (Box (Box F \/ q /\ Dia (Box F /\ Neg q) > --> q))) > ]If everything is working, regress1 should give the result True.

> regress1 = do > print $ (and $ map valid valids) && > (and $ map (not . valid) invalids)That's enough implementation. In the next installment we'll start putting this code to work.

References

This code is an implementation of the algorithm described in Chapter 10 of The Logic of Provability. See that book if you want (1) a proof that the above algorithm always terminates and (2) that it really does correctly decide the validity of propositions of provability logic.